You ask an AI a precise question about a policy, a spec, or a project update. The response sounds confident, polished, and professional, yet it’s wrong.

In AI, that’s a hallucination. In business, it’s risk.

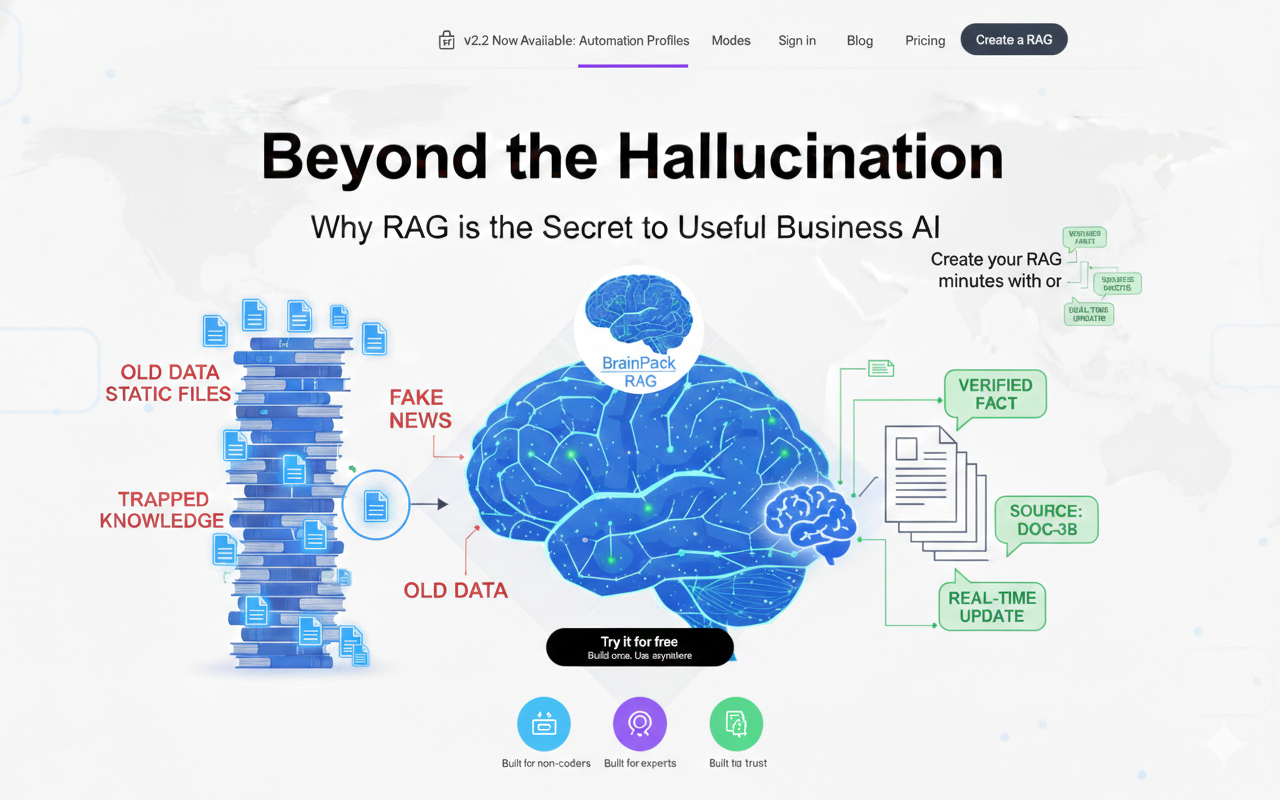

Large Language Models (LLMs) like GPT-4 are powerful, but they operate like “closed books.” Their knowledge is fixed at training time, so they don’t truly know your company’s latest documents, decisions, or internal context.

If you want to move from generic AI to a reliable knowledge engine, you need Retrieval-Augmented Generation (RAG), a method that retrieves relevant information from your sources before the model answers.

What is RAG and why it matters for business AI

The “closed book” problem with standard LLMs

Base models generate answers from patterns learned during training. When your question requires up-to-date or company-specific information, the model may guess, generalize, or fabricate.

RAG as an “open-book exam”

RAG changes the rules: before generating an answer, the system retrieves the most relevant snippets from your own data sources (docs, wikis, PDFs, notes). The model then answers using that retrieved context.
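To make the retrieval step concrete, here is a minimal sketch. It assumes a tiny in-memory corpus and uses plain word-overlap scoring as a stand-in for the embedding or BM25 ranking that production RAG systems typically use. The document ids, texts, and function names are hypothetical.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query: str, doc: str) -> int:
    """Count shared word occurrences between query and document (multiset intersection)."""
    shared = Counter(tokenize(query)) & Counter(tokenize(doc))
    return sum(shared.values())


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of up to k documents with the highest non-zero overlap score."""
    scored = [(overlap_score(query, text), doc_id) for doc_id, text in docs.items()]
    # Stable sort: documents with equal scores keep their corpus order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


# Hypothetical corpus standing in for your docs, wikis, and PDFs.
docs = {
    "refund-policy": "A refund is available within 30 days of purchase with a receipt.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "onboarding": "New employees complete security training in their first week.",
}

question = "How many days do I have to request a refund?"
top_ids = retrieve(question, docs, k=1)
context = [docs[doc_id] for doc_id in top_ids]  # snippets handed to the model
print(top_ids)  # ['refund-policy']
```

The model never searches anything itself; it only receives `context` alongside the question. Swapping the scoring function for a better ranker changes retrieval quality without changing the rest of the pipeline.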

Advantage 1: Real-time intelligence instead of static knowledge

Why knowledge cutoffs create business risk

When information changes daily—policies, product docs, pricing, procedures—static model knowledge quickly becomes inaccurate.

How retrieval keeps answers current

RAG doesn’t rely on the model “remembering” the world. It retrieves the latest approved information at query time, so answers reflect what’s true now, not what was true months ago.

Brainpack angle: a dynamic brain

With a Brainpack workflow, once a new update is ingested, it becomes queryable immediately without waiting for retraining.
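The idea that ingestion, not retraining, keeps answers current can be sketched with a hypothetical in-memory store. `KnowledgeStore`, `ingest`, and `search` are illustrative names, not a Brainpack API: re-ingesting a document replaces its old version, and the very next query sees the new text.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeStore:
    """Hypothetical in-memory store: each document id keeps only its latest ingested version."""

    docs: dict[str, str] = field(default_factory=dict)

    def ingest(self, doc_id: str, text: str) -> None:
        # Overwrite any earlier version. No model weights are touched.
        self.docs[doc_id] = text

    def search(self, phrase: str) -> list[str]:
        # Case-insensitive substring match over the current versions only.
        needle = phrase.lower()
        return [text for text in self.docs.values() if needle in text.lower()]


store = KnowledgeStore()
store.ingest("remote-work", "Remote work requires written manager approval.")
before = store.search("remote work")

# A (hypothetical) policy update is ingested; the next query already sees it.
store.ingest("remote-work", "Remote work is approved by default for up to two days a week.")
after = store.search("remote work")

print(before)  # ['Remote work requires written manager approval.']
print(after)   # ['Remote work is approved by default for up to two days a week.']
```

Because the outdated version is overwritten rather than kept alongside the new one, retrieval cannot surface the superseded policy.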

Advantage 2: Reducing hallucinations and the “confidence trap”

Why fluent answers can still be false

LLMs are trained to produce text that is coherent and plausible, not text that has been verified. That’s why they can deliver incorrect statements with complete confidence.

Grounding: forcing the answer to use evidence

RAG constrains the model with an instruction such as “Answer using only these retrieved documents.” This shifts the output from “plausible text” toward an “evidence-based response.”
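In practice, much of grounding is prompt construction. Here is a minimal sketch, assuming the retrieved snippets are already available as strings. The instruction wording and the name `build_grounded_prompt` are illustrative, and the call to an actual model is left out because it depends on your provider.

```python
def build_grounded_prompt(question: str, snippets: list[str]) -> str:
    """Build a prompt that tells the model to answer only from the retrieved snippets."""
    # Number each snippet so the answer can cite its evidence, e.g. [1].
    sources = "\n".join(f"[{i}] {snippet}" for i, snippet in enumerate(snippets, start=1))
    return (
        "Answer using only the numbered sources below and cite them like [1].\n"
        "If the sources do not contain the answer, reply exactly: I don't know.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_grounded_prompt(
    "How long do customers have to request a refund?",
    ["A refund is available within 30 days of purchase with a receipt."],
)
print(prompt)
```

An instruction like this makes unsupported answers less likely but does not guarantee it. The numbered citations are what let a reviewer check each claim against its source, and the explicit "I don't know" path gives the model an alternative to guessing.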

Brainpack angle: not only accuracy—human mastery too

Beyond grounding, Brainpack can turn critical documents into training paths (quizzes, certification-style learning), so the organization’s people learn the same validated knowledge the AI is using.

Advantage 3: Deep context without generic templates

Why “generic AI” sounds right but fails in practice

Ask a standard LLM for a customer support reply and you’ll get a template. Ask it to match your specific refund policy, brand voice, and current roadmap—and it will drift.

RAG retrieves your nuance

When your AI can access your actual policies, product specs, internal playbooks, and decision logs, it responds with specificity: the “why,” the “how,” and the exact constraints your business operates under.

Advantage 4: Build once, deploy anywhere

Fine-tuning is expensive and becomes outdated quickly

Fine-tuning often requires specialized expertise, significant compute, and large training datasets. Even then, as soon as your documents change, the tuned model can lag behind reality.

RAG is modular and easier to maintain

RAG is a plug-in architecture: update the knowledge sources and the system updates its answers without retraining a model.

Brainpack angle: portability and ownership

A Brainpack approach emphasizes portability: export your validated knowledge corpus (e.g., JSONL/CSV/registry-style structures) and deploy it into your own stack, agents, or apps. You keep ownership of the engine; the tooling helps you build and maintain it.
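To make portability concrete, here is a sketch of exporting a validated corpus as JSON Lines (one JSON object per line) using only Python's standard library. The record fields (`id`, `text`, `source`, `validated`) and their values are a hypothetical layout, not Brainpack's actual export schema.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical record layout, not Brainpack's actual export schema.
records = [
    {"id": "refund-policy", "text": "A refund is available within 30 days of purchase.",
     "source": "policies/refunds.pdf", "validated": True},
    {"id": "onboarding", "text": "New employees complete security training in week one.",
     "source": "wiki/onboarding", "validated": True},
]


def export_jsonl(rows: list[dict], path: Path) -> None:
    """Write one JSON object per line (the JSON Lines format)."""
    with path.open("w", encoding="utf-8") as f:
        for row in rows:
            f.write(json.dumps(row, ensure_ascii=False) + "\n")


def load_jsonl(path: Path) -> list[dict]:
    """Read a JSON Lines file back into a list of dicts, skipping blank lines."""
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Round-trip through a temporary file to show the export loses nothing for these records.
with tempfile.TemporaryDirectory() as tmp:
    corpus_path = Path(tmp) / "corpus.jsonl"
    export_jsonl(records, corpus_path)
    reloaded = load_jsonl(corpus_path)
    line_count = len(corpus_path.read_text(encoding="utf-8").splitlines())

print(reloaded == records, line_count)  # True 2
```

Because each line is an independent JSON object, the file can be appended to, streamed line by line, or loaded by another tool without a custom parser.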

Conclusion: Information is data. RAG turns it into capital.

Information trapped in static PDFs is a liability. Information transformed into a portable, queryable, validated RAG engine becomes intellectual capital—usable across support, operations, onboarding, and decision-making.

RAG is the practical step that makes business AI reliable, transparent, and truly useful.

Next steps

- If your LLM is guessing, here is the roadmap to fix it: RAG AI Guide →

- See the packaged workflow: Brainpack Product →

- Choose a plan: Pricing →

Related articles (coming soon):

- Brainpack vs RAG Systems

- Brainpack vs Knowledge Bases

- Brainpack Architecture

- How to Create a Brainpack